When Does Human-Centered Design Step Into AI? Now.

That realization came full circle for me.

The work I did in the 1970s with the Human Factors group at Xerox PARC ultimately became the foundation for Psycho-Aesthetics®. Long before AI entered the conversation, we understood something that still holds true today: technology consistently advances faster than our understanding of human perception, emotion, and motivation.

AI is now extraordinarily powerful. But it still cannot explain why people care, trust, adopt, or resist. And across decades of work, I've learned that this is where most initiatives quietly fail.

Across enterprise transformation, digital programs, and strategy execution, widely cited research suggests that roughly 60–70% of major initiatives fail or materially underperform. The cause is rarely inadequate technology; it is that the people involved never fully aligned around meaning, value, risk, or intent. Organizations build impressive systems that people never truly adopt.

That is not an AI problem.

It is a human intelligence problem.

To stay relevant—and to do the right thing for people and the companies that serve them—we needed a rigorous, validated foundation that could guide AI rather than be driven by it.

In 1992, I formalized Psycho-Aesthetics®, a human-centered methodology designed to decode how people perceive meaning and make decisions. Over the decades, it has been refined through two Harvard publications, a Wharton publication, two books, thousands of pages of research, and nearly a hundred major programs across industries.

What eventually became clear was this: if human perception, emotion, and symbolic meaning drive adoption, then they must become governing inputs—not post-hoc considerations.

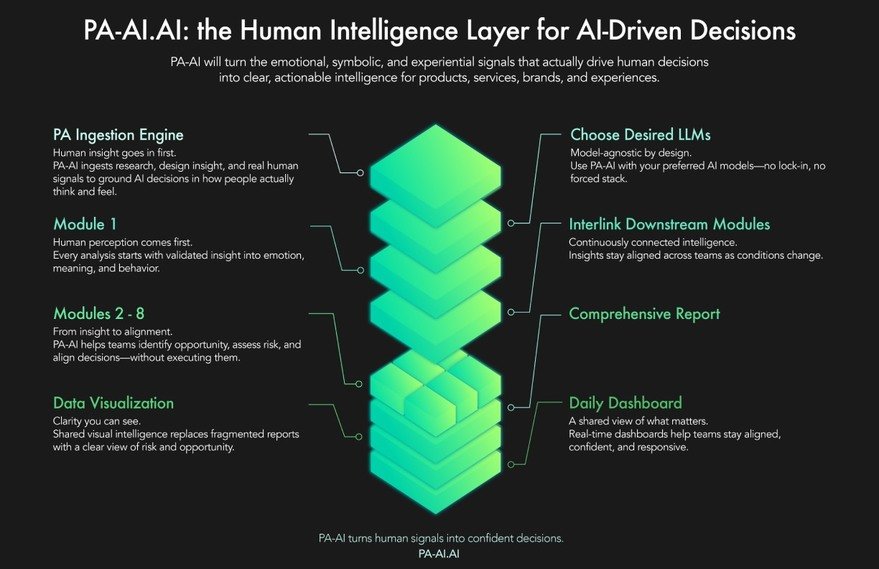

PA-AI emerged from that realization.

I often describe AI as a powerful engine, but one without a human-machine interface, transmission, or wheels on the ground. PA-AI provides that human intelligence layer. It translates perception, emotional resonance, and symbolic meaning into decision logic that AI systems can actually use, bringing direction, control, and clarity to outputs that otherwise remain abstract.

Having incubated companies across categories, from a legendary guitar brand to fintech, and served on multiple product and technology boards, I've seen the same failure pattern repeat itself. Teams never fully align on what "success" means. Decisions get made without understanding human adoption. Politics overrides clarity. Risks surface too late. And once execution begins, there are no meaningful feedback loops to detect drift or resistance.

PA-AI was designed to attack those failure modes directly—before capital is committed, reputations are at stake, and momentum is lost.

One of the most powerful outcomes has been team alignment, especially in a world of globally distributed organizations. To support that, we built a living intelligence system that delivers continuous insight rather than static reports. At its core is SPS®—the Success Potential Score—a proprietary metric that evaluates the likelihood of success by measuring human perception, emotional resonance, symbolic meaning, and adoption drivers in real time.

This is PA-AI.

We're currently running proof-of-concept programs with clients, with more than a dozen completed and another dozen underway. What's emerging is not just better prediction—but better decision behavior.

The next era of AI will not be defined by capability. It will be defined by whether what we build is adopted, trusted, and sustained.

That is a design problem.

And it always has been.
